
Commit


[CELEBORN-1792] MemoryManager resume should use pinnedDirectMemory in…

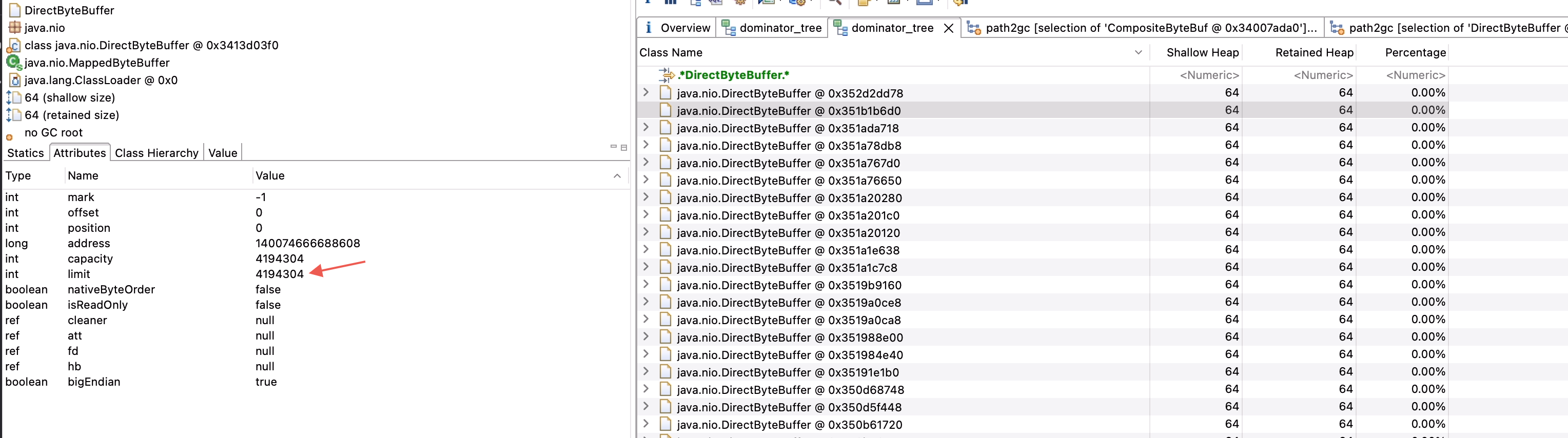

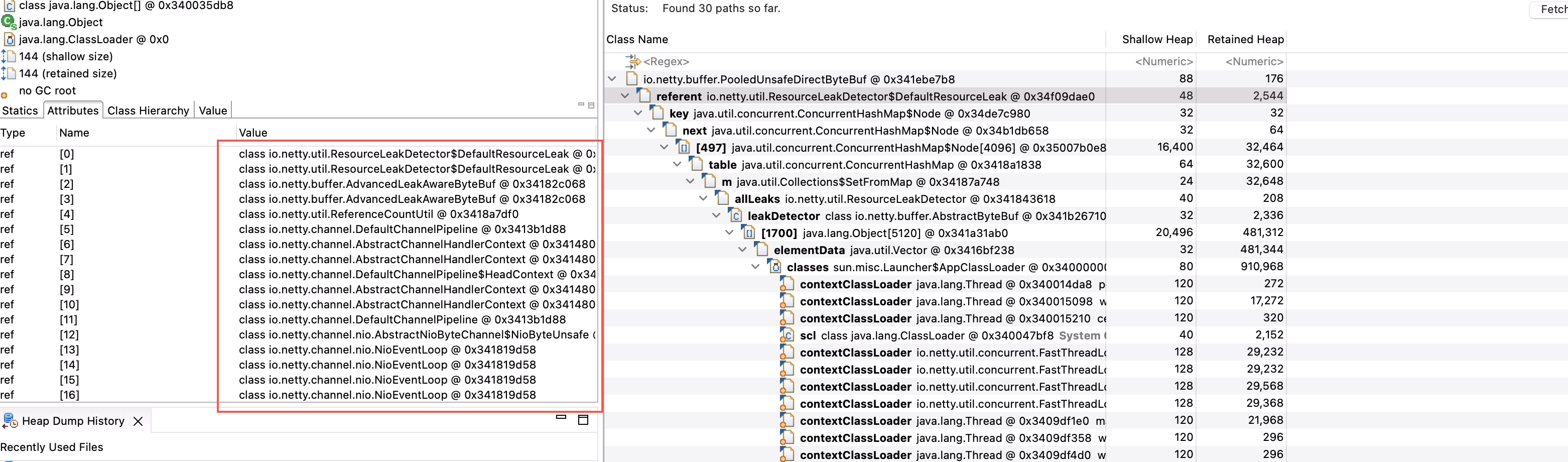

…stead of usedDirectMemory ### What changes were proposed in this pull request? Congestion and MemoryManager should use pinnedDirectMemory instead of usedDirectMemory ### Why are the changes needed? In our production environment, after worker pausing, the usedDirectMemory keep high and does not decrease. The worker node is permanently blacklisted and cannot be used. This problem has been bothering us for a long time. When the thred cache is turned off, in fact, **after ctx.channel().config().setAutoRead(false), the netty framework will still hold some ByteBufs**. This part of ByteBuf result in a lot of PoolChunks cannot be released. In netty, if a chunk is 16M and 8k of this chunk has been allocated, then the pinnedMemory is 8k and the activeMemory is 16M. The remaining (16M-8k) memory can be allocated, but not yet allocated, netty allocates and releases memory in chunk units, so the 8k that has been allocated will result in 16M that cannot be returned to the operating system. Here are some scenes from our production/test environment: We config 10gb off-heap memory for worker, other configs as below: ``` celeborn.network.memory.allocator.allowCache false celeborn.worker.monitor.memory.check.interval 100ms celeborn.worker.monitor.memory.report.interval 10s celeborn.worker.directMemoryRatioToPauseReceive 0.75 celeborn.worker.directMemoryRatioToPauseReplicate 0.85 celeborn.worker.directMemoryRatioToResume 0.5 ``` When receiving high traffic, the worker's usedDirectMemory increases. After triggering trim and pause, usedDirectMemory still does not reach the resume threshold, and worker was excluded.  So we checked the heap snapshot of the abnormal worker, we can see that there are a large number of DirectByteBuffers in the heap memory. These DirectByteBuffers are all 4mb in size, which is exactly the size of chunksize. According to the path to gc root, DirectByteBuffer is held by PoolChunk, and these 4m only have 160k pinnedBytes.   
There are many ByteBufs that are not released  The stack shows that these ByteBufs are allocated by netty  We tried to reproduce this situation in the test environment. When the same problem occurred, we added a restful api of the worker to force the worker to resume. After the resume, the worker returned to normal, and PushDataHandler handled many delayed requests.   So I think that when pinnedMemory is not high enough, we should not trigger pause and congestion, because at this time a large part of the memory can still be allocated. ### Does this PR introduce _any_ user-facing change? No. ### How was this patch tested? Existing UTs. Closes #3018 from leixm/CELEBORN-1792. Authored-by: Xianming Lei <[email protected]> Signed-off-by: Shuang <[email protected]>
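The gap between the two metrics can be illustrated with a small arithmetic sketch. It is not the code changed by this commit: the class and method names are hypothetical, the chunk count of 1600 is an assumed value chosen only for illustration, and the check is a simplified stand-in for the worker's resume logic. The 4 MB chunk size, 160 KB pinned per chunk, 10 GB limit, and 0.5 resume ratio come from the description above.

```java
public class PinnedVsUsedSketch {
    static final long KB = 1024L;
    static final long MB = 1024L * KB;
    static final long GB = 1024L * MB;

    /** Memory held from the OS: every live chunk counts in full. */
    static long usedBytes(long chunkCount, long chunkSize) {
        return chunkCount * chunkSize;
    }

    /** Memory actually handed out to live buffers inside those chunks. */
    static long pinnedBytes(long chunkCount, long pinnedPerChunk) {
        return chunkCount * pinnedPerChunk;
    }

    /** Simplified resume check: measured bytes must be below ratio * max. */
    static boolean belowResumeThreshold(long measured, long maxDirect, double resumeRatio) {
        return measured < (long) (maxDirect * resumeRatio);
    }

    public static void main(String[] args) {
        long maxDirect = 10 * GB;        // off-heap limit from the description
        long chunkSize = 4 * MB;         // chunk size seen in the heap snapshot
        long pinnedPerChunk = 160 * KB;  // pinned bytes per chunk in the snapshot
        long chunks = 1600;              // assumed count, for illustration only
        double resumeRatio = 0.5;        // celeborn.worker.directMemoryRatioToResume

        long used = usedBytes(chunks, chunkSize);
        long pinned = pinnedBytes(chunks, pinnedPerChunk);

        System.out.println("used MB   = " + used / MB);
        System.out.println("pinned MB = " + pinned / MB);
        System.out.println("resume by used?   " + belowResumeThreshold(used, maxDirect, resumeRatio));
        System.out.println("resume by pinned? " + belowResumeThreshold(pinned, maxDirect, resumeRatio));
    }
}
```

Under these assumed numbers, a check based on used memory keeps the worker paused even though only a small fraction of each chunk is pinned, while a check based on pinned memory lets it resume.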


Showing 5 changed files with 224 additions and 49 deletions.
